Today I noticed the SIMD implementation of the Mandelbrot set algorithm I blogged about last year was successfully submitted to the language shootout webpage. However, I was a bit disappointed to see the C# version was still slower than the Java version, despite my use of the special SIMD instructions (though I was pleased that a quick port of my code to F# absolutely annihilates the OCAML version of this benchmark).

I had benchmarked my code as faster than the Java code on my Mac, the CentOS cluster at my work and two Ubuntu virtual machines (Mono >3.0, LLVM compiler). What gave?

Undeterred, I thought I would try to use SIMD to improve another C# benchmark, and took a shot at the N-body benchmark test. Once again, the version I wrote, in my hands, was much faster. But when submitted to the shoot-out and accepted, it was either much slower, or didn’t even run. My submission using the SSE instructions not only didn’t beat Java, it was actually slower than the original C# version!

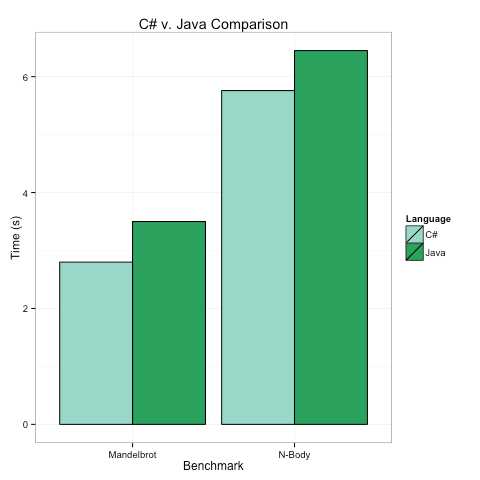

Below are the timings on my top-of-the-line Mac for the two benchmarks, in both cases we see that the C# program runs in 80-90% of the time the Java Program takes. There are several key take aways here.

C# and Java have no meaningful performance differences.

C# may use a lot less memory, but this is my optimized C# code and it is only beating the optimized Java by <= 20%. When does that matter?C# and Java’s similar performance has different underpinnings

There are a lot of ways code can be fast, and I was surprised that Java and C# achieve similar speeds in very different ways.

The key advantage of the C# code was the SIMD instructions, which in theory give a ~2X speed up. However, they only win by ~20%. Why?

I think the assembly gives the answer. Just some quick snippets tell the whole story. Here is some C# code for the N-Body problem compiled to assembly:

|

1 2 3 4 5 6 7 8 |

00000034 vsubpd 0x18(%esi), %xmm2, %xmm2 00000039 vmulpd %xmm1, %xmm1, %xmm3 0000003d vmulpd %xmm2, %xmm2, %xmm4 00000041 vhaddpd %xmm3, %xmm3, %xmm3 00000045 vhaddpd %xmm4, %xmm4, %xmm4 00000049 vaddpd %xmm4, %xmm3, %xmm3 0000004d vsqrtpd %xmm3, %xmm4 00000051 vmulpd %xmm4, %xmm3, %xmm3 |

The important insights into performance from the assembly here are:

- Similar instructions are stacked on top of each other, allowing for pipelining (e.g. the same vhaddpd instruction follows the same vhaddpd, and both use different registers, so can execute simultaneously).

- The “p” in the instructions (i.e. vhadd-“p”-d). This stands for “packed” meaning we are packing/doing two operations at once via SIMD.

- Only registers XMM1-XMM4 appear in the instructions. There are more registers available, and more pipelining possible, but the Mono/LLVM compiler appears to only use the low-number/scratch registers. It is easier to write compilers that obey this restriction.

Now let’s compare that to some Java assembly emitted by the Oracle runtime for the same benchmark:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 |

0x0000000109ce2315: vmulsd %xmm11,%xmm1,%xmm2 0x0000000109ce231a: vmulsd %xmm11,%xmm9,%xmm3 0x0000000109ce231f: vmulsd %xmm9,%xmm9,%xmm0 0x0000000109ce2324: vmulsd %xmm1,%xmm1,%xmm5 0x0000000109ce2328: vmovsd 0x38(%r12,%rdx,8),%xmm4 0x0000000109ce232f: vaddsd %xmm0,%xmm5,%xmm5 0x0000000109ce2333: vmovsd 0x30(%r12,%rdx,8),%xmm6 0x0000000109ce233a: vmovsd 0x28(%r12,%rdx,8),%xmm7 0x0000000109ce2341: vmovsd 0x20(%r12,%rbp,8),%xmm0 0x0000000109ce2348: vmovsd 0x40(%r12,%rbp,8),%xmm8 0x0000000109ce234f: vsubsd %xmm0,%xmm12,%xmm0 0x0000000109ce2353: vmulsd %xmm8,%xmm9,%xmm9 0x0000000109ce2358: vmulsd %xmm11,%xmm0,%xmm14 0x0000000109ce235d: vmulsd %xmm8,%xmm0,%xmm15 0x0000000109ce2362: vmulsd %xmm0,%xmm0,%xmm0 0x0000000109ce2366: vmulsd %xmm8,%xmm1,%xmm1 |

The important insights here are:

- Java uses the version of the instructions with “s” (i.e. vmul-“s”-d) meaning single, and gets no SSE advantage. No doubt this makes writing compilers easier.

- However, Java is using lots of registers, XMM15 shows up!

- As a result of using all the registers used, the Java code has great pipelining. Note that up to 5 vmulsd instructions show up at once. The JVM is simply brilliant at packing operations together, and this means that even though I, the programmer, was smart and used SSE2, my C# code only won by 20%, and not 200%. It’s hard to beat Java pipelining.

All of which makes me wonder. What if we took the high-level language advantages of C# (low-overhead value types, easy interop via pointers, baked in generics, etc.). And combined them with the low-level advantages of the JVM (better array bounds check elimination, pipelining compiler optimizations, and maybe someday the occasional stack allocation of reference type variables…)